By Lee Flanagan

✨ AI Summary:

- Your current interview process likely fails to distinguish between genuine capability and AI-polished rehearsed answers.

- Interviewers must develop advanced, role-specific questioning and probing techniques that reveal depth of experience beyond surface-level narratives.

- Weak interview processes increase security and compliance risk by enabling fake or proxy candidates to infiltrate organizations.

- Invest in interviewer capability with real-time guidance, structured job-relevant criteria, and auditable records to safeguard hiring quality and governance.

Last quarter, three different enterprise TA leaders told us the same story. Different companies. Different industries. Same ending.

A senior technical hire. Impressive CV. Sharp in every round. Articulate, confident, technically fluent. The panel is unanimous: strong hire. Three months later, the team is carrying the weight. The demonstrated capability turns out to be surface-deep. A project stalls. A roadmap slips. The re-hire cycle begins. When you ask the interviewers what happened, the answer is always the same: “He seemed really confident and well-prepared.”

That’s the point. He was well-prepared. By AI. And your interviews weren’t designed to detect the difference.

This Is Not an Edge Case

If you think AI-assisted candidacy is a fringe problem, something happening to other companies in other industries, you’re not paying attention. This is now the default. Every candidate in your pipeline has access to tools that polish CVs, generate tailored cover letters, rehearse interview answers, and simulate entire interview scenarios with real-time feedback. The question is no longer whether candidates are using AI. It’s how much.

The data makes the trajectory painfully clear. Fabric’s analysis of over 50,000 candidates found that the rate of cheating more than doubled in six months, from 15% in June 2025 to 35% by December 2025. That’s not a slow drift. That’s an inflection point. And Gartner predicts that by 2028, one in four candidate profiles worldwide will be fake. Not embellished. Fake.

When Google and McKinsey started reintroducing mandatory in-person interviews in 2025 specifically to counter AI interview fraud, that was not an overreaction. When two of the most analytically rigorous hiring organisations on the planet decide their virtual interview process can no longer distinguish real capability from rehearsed performance, the signal is impossible to ignore.

If you’re still running the same interview process you ran two years ago, you’re assessing candidates for a world that no longer exists.

Why Your Interviews Can’t Catch It

The problem isn’t that candidates are cheating. The problem is that your interviews were designed for a world where candidates prepared alone with a notebook and a friend willing to do a practice round. In that world, behavioural questions like “Tell me about a time when you dealt with conflict” were effective because the quality of the answer reflected the quality of the thinking. Genuine experience produced nuanced, specific, imperfect stories. Rehearsed answers felt rehearsed.

That distinction has collapsed. A candidate who spends thirty minutes with ChatGPT can produce a STAR-format answer that is specific, structured, and hard to tell apart from one grounded in genuine experience. They’ll have practised it until the delivery feels natural. They’ll have anticipated your follow-ups. As MIT Sloan Management Review noted, AI interview preparation tools don’t just help candidates rehearse answers. They help candidates construct entirely plausible narratives around experiences they may never have had.

This is not a technology problem. It’s a capability problem. Your interviewers are running surface-level assessments against candidates who have been trained to perform at surface level with extraordinary polish. The interview rewards plausibility, and AI delivers plausibility at scale. If your hiring managers are still evaluating candidates based on how articulate and confident they sound, they are measuring exactly the thing AI is best at producing.

Here’s the challenge: when was the last time your organisation invested in upgrading interviewer capability at the same pace candidates have upgraded theirs?

This Is a Security Problem, Not Just a Talent Problem

Most TA leaders we speak to still frame this as a hiring quality issue. Understandable. But the risk categories extend far beyond a bad hire. When your interview process can’t reliably verify who someone is and what they can actually do, you’re creating an attack surface.

This isn’t theoretical. The FBI has issued repeated warnings about state-sponsored actors using AI-generated identities, deepfake video, and proxy interviewers to infiltrate Western companies through their hiring process. CrowdStrike reported a 220% increase in the number of companies infiltrated by North Korean threat actors over just twelve months, with over 320 organisations compromised in a single year. These aren’t amateur operations. They use stolen credentials, synthetic identities, AI-generated communications, and live interview proxies to pass through standard hiring processes and gain access to internal systems, proprietary code, and client data.

Checkr’s survey of 3,000 hiring managers found that one in three had already discovered a candidate using a fake identity or a proxy during an interview. One in three. And those are only the ones that were caught.

Ask your CISO whether your interview process is part of their threat model. If it isn’t, it should be. Every hire that passes through a weak interview process without genuine capability verification is a governance failure. Not just a talent failure. A governance failure with security, compliance, and reputational consequences.

The organisations that take this seriously are the ones connecting their TA, legal, and infosec teams around a shared understanding: the interview is no longer just an assessment. It’s a frontline defence.

What Advanced Interviewing Actually Looks Like

The good news is that this problem is solvable. AI-assisted candidates are optimised for surface-level performance. They struggle when interviews are designed to go deeper.

Consider two interviewers, same candidate, same role. Interviewer A asks: “Tell me about a time you led a complex technical migration.” The candidate delivers a flawless three-minute answer. Clear structure, specific metrics, confident delivery. Interviewer A is impressed. Interviewer B asks the same question, gets the same polished answer, and then follows up: “Walk me through how you actually scoped that migration. What was the first thing you did when you inherited the project? What broke that you didn’t expect? What would you do differently?” The candidate hesitates. The specificity dissolves. The follow-ups expose what the surface answer concealed: the candidate can describe the work, but they didn’t do the work.

That’s the difference between surface-level interviewing and advanced interviewing. Advanced means role-specific criteria tied to on-the-job success, not generic competency questions. Predictive questions that are built around the specific context of the role, so they can’t be scripted in advance. Follow-up probing that tests depth, not breadth. Pivot questions and work-sample scenarios that make it significantly harder for a candidate to sustain a fabricated narrative. And consistent structure across interviewers, so you can spot inconsistencies across rounds that a single interviewer would miss.

The stakes of getting this wrong are not abstract. SHRM and the U.S. Department of Labor estimate the cost of a bad hire at between $17,000 for entry-level roles and $240,000 or more for senior positions. For high-impact, high-access roles, the true cost (delayed delivery, team disruption, security exposure, the re-hire cycle) is often multiples of that number. And 74% of employers admit they’ve made the wrong hiring decision.

The irony is that most organisations have invested heavily in the front end of the hiring funnel: sourcing, screening, employer brand. They’ve invested almost nothing in the capability of the people who make the actual decision. Your interviewers are the last line of defence. How well-equipped are they?

The Governance Question Nobody’s Asking

This is no longer just a TA conversation. It’s a boardroom one.

If your interviewers can’t reliably assess candidates, every hire is an unmanaged risk. Not just a performance risk. A compliance risk. A security risk. A risk your legal, infosec, and AI strategy teams should be asking about. And increasingly, they are.

In every conversation we’re having with TA leaders right now, the same pattern surfaces: as organisations evaluate their hiring processes, the Heads of Tech, AI Strategy, and Legal are entering the room. They’re asking hard questions about governance, defensibility, and oversight. They want to know that the interview process is structured, auditable, and designed to catch what surface-level assessment misses. They want evidence that interviewer capability is being actively managed, not assumed. And they want to know what happens when something goes wrong. Not just “we’ll re-hire.” What was the process? Was it consistent? Can you demonstrate it?

What these organisations need is not another training programme that hiring managers attend once and forget. They need a system that embeds hiring expertise into the interview itself. Role-specific criteria that reflect what success actually requires in this role. Structured, predictive questions that go beyond what a candidate can rehearse. In-the-moment guidance that supports the interviewer while the conversation is happening, not after it’s over. And feedback that improves interviewer capability with every interview, creating a cycle of continuous improvement rather than periodic intervention.

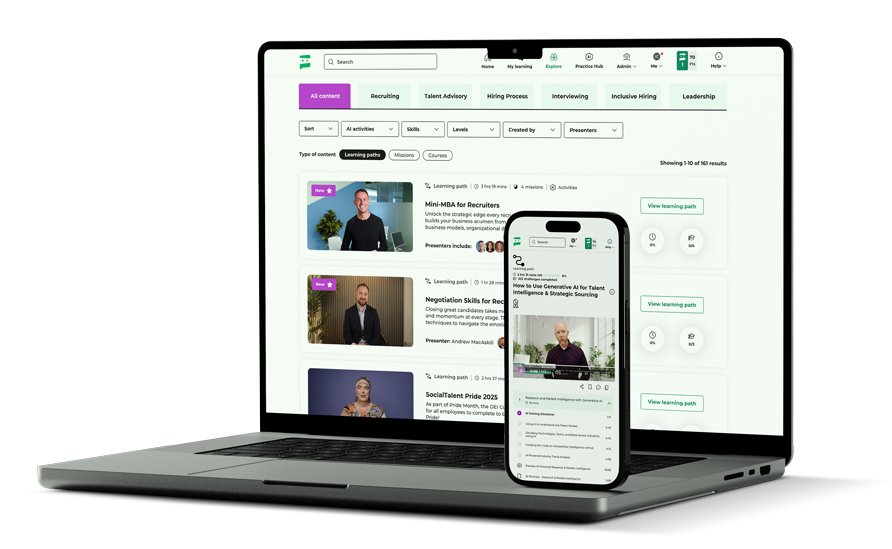

This is what Cara, SocialTalent’s AI hiring assistant, is built to do. Cara doesn’t evaluate candidates. It evaluates interviewers. It guides them in the moment with role-specific preparation, structured questions, and real-time support designed to surface the kind of evidence that AI-polished candidates can’t fake.

When evidence gathering is weak, Cara identifies the gap and delivers targeted coaching. When an interviewer asks a non-job-related question, Cara flags it. The result is interviewers who get measurably better with every interview, and outputs that are neutral, job-related, and bias-aware: the kind of defensible, auditable interview record that legal and compliance teams increasingly require.

The Question You Need to Answer

Go back to the senior technical hire from the top of this piece. The polished CV. The confident performance in every round. The unanimous panel decision. The three-month failure.

That scenario is not hypothetical. It is playing out at enterprises right now. The only variable is how long it takes to discover. Some organisations catch it in months. Others absorb the cost for a year before the pattern becomes undeniable.

AI-assisted candidates are already in your pipeline. Some of them are misrepresenting their capabilities. The only question is whether your interviewers, today, are equipped to tell the difference between genuine capability and rehearsed plausibility. Between someone who has done the work and someone who has learned to describe it convincingly.

How confident are you that your interviewers would have caught it?