Extended definition

AI in recruiting moved from emerging category to standard tooling between roughly 2020 and 2025. By 2026 it spans most stages of the hiring process — Boolean string generation, resume parsing, candidate matching, outreach drafting, interview scheduling, transcript-and-scorecard analysis, and content generation for job descriptions.

The technology range is wide. Older statistical models still handle resume parsing and basic candidate matching, while modern large language models handle outreach writing, interview transcript analysis, and conversational candidate experience.

The strongest TA functions use AI to augment recruiter judgment rather than replace it; the ones treating AI as a recruiter substitute typically produce worse outcomes than well-supported humans, especially on consequential decisions like screening and selection.

What AI does in recruiting today

A modern TA stack typically uses AI across six functions:

- Sourcing assistance — AI generates Boolean strings, suggests target profiles, ranks sourced candidates against job criteria, and drafts personalised outreach. Most modern sourcing platforms (SeekOut, HireEZ, Gem) ship AI features as standard.

- Resume parsing and matching — AI extracts structured data from CVs and matches candidates against role requirements at scale. Used heavily in high-volume hiring; common in most ATSes.

- Interview scheduling — Conversational AI handles candidate scheduling, reminder management, and rescheduling. Reduces recruiting coordinator workload significantly when implemented well.

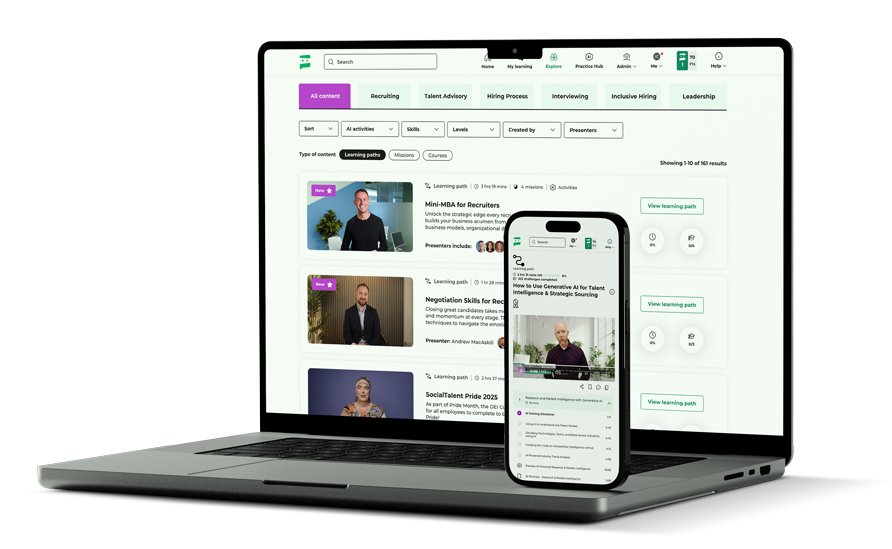

- Interview intelligence — AI transcribes interviews, surfaces scorecard prompts in real time, analyses competency coverage, and flags calibration drift. Examples include SocialTalent’s Interview Intelligence platform.

- Content generation — AI drafts job descriptions, candidate summaries, rejection messages, and offer letters. Used to accelerate work; reviewed by humans before going to candidates.

- Decision support — AI surfaces patterns in candidate data, flags potential bias indicators, and provides analytical input to hiring decisions. Used as input to human judgment rather than as a replacement for it.
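To make the Boolean strings mentioned under sourcing assistance concrete, here is a minimal sketch of how one might be assembled from structured role criteria. It is a deterministic illustration rather than an AI model, and the function name `build_boolean_query`, its parameters, and the sample role are all hypothetical. Boolean syntax also varies between sourcing platforms, so the operators shown here are an assumption.

```python
def build_boolean_query(titles, must_have, exclude=()):
    """Assemble a simple Boolean search string (hypothetical helper).

    titles    -- alternative job titles, joined with OR
    must_have -- skills or keywords that must all appear, joined with AND
    exclude   -- terms to filter out, each prefixed with NOT
    """
    def quote(term):
        # Multi-word terms are quoted so they match as a phrase.
        return f'"{term}"' if " " in term else term

    parts = []
    if titles:
        parts.append("(" + " OR ".join(quote(t) for t in titles) + ")")
    parts.extend(quote(s) for s in must_have)

    query = " AND ".join(parts)
    for term in exclude:
        query += f" NOT {quote(term)}"
    return query.strip()


# Illustrative input only; not taken from any real role.
query = build_boolean_query(
    titles=["data engineer", "analytics engineer"],
    must_have=["Python", "SQL"],
    exclude=["intern"],
)
print(query)
```

In practice an AI-assisted tool would propose the titles, skills, and exclusions; the recruiter would still review the resulting string before running it.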

The regulatory landscape matters. The EU AI Act classifies most hiring AI as “high-risk”, with specific obligations on transparency, bias monitoring, and human oversight. New York City Local Law 144 requires bias audits of automated employment decision tools, and several other US jurisdictions have passed or are considering similar requirements.

Because these rules differ by location and continue to change, practitioners deploying AI in hiring need current, jurisdiction-specific compliance guidance.
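As a rough illustration of the arithmetic behind a bias audit, the sketch below computes an impact ratio per category: each category's selection rate divided by the selection rate of the most-selected category. The function name `impact_ratios`, the category labels, and the sample numbers are hypothetical, and this is a simplified sketch rather than a compliant audit procedure. The 0.8 figure in the comments is the "four-fifths" rule of thumb from US employment guidance, used here only as an assumed flagging threshold.

```python
from collections import Counter


def impact_ratios(outcomes):
    """Compute selection-rate impact ratios per category (hypothetical helper).

    outcomes -- iterable of (category, was_selected) pairs
    Returns a dict mapping each category to its selection rate divided by
    the highest selection rate observed across categories.
    """
    totals = Counter()
    selected = Counter()
    for category, was_selected in outcomes:
        totals[category] += 1
        if was_selected:
            selected[category] += 1

    rates = {c: selected[c] / totals[c] for c in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates were selected in any category")
    return {c: rate / best for c, rate in rates.items()}


# Made-up screening outcomes: 40 of 100 selected in group_a, 25 of 100 in group_b.
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 25 + [("group_b", False)] * 75
)
ratios = impact_ratios(sample)

# Assumed rule-of-thumb threshold; whether it applies is a legal question.
flagged = [c for c, r in ratios.items() if r < 0.8]
print(ratios, flagged)
```

A low ratio is a prompt for investigation, not proof of bias on its own; sample sizes and the legal definition of each category both matter.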

Why AI in recruiting matters

AI changes the unit economics of recruiting. Tasks that previously required hours of recruiter time — sourcing list generation, outreach drafting, interview transcription — now take minutes.

The capacity gains let recruiters spend more time on the work that requires judgment: candidate conversations, hiring manager partnership, complex screening decisions. For TA leaders, AI investment over the past several years has typically produced meaningful productivity gains alongside new operational considerations — bias monitoring, regulatory compliance, candidate-facing AI disclosure.

The value compounds when AI is deployed as augmentation; it deteriorates when AI is positioned as recruiter replacement.

Common mistakes and misconceptions about AI in recruiting

- Treating AI as recruiter replacement — The strongest results come from AI augmenting recruiter judgment. Companies that try to replace recruiters with AI typically produce worse hire quality and worse candidate experience than well-supported humans.

- Skipping bias and compliance review — A growing number of jurisdictions now impose specific obligations on AI used in hiring decisions, including the EU AI Act and NYC Local Law 144. Deploying without compliance review creates regulatory exposure.

- Hiding AI use from candidates — Most regulatory frameworks require disclosure when AI is used in candidate evaluation. Beyond compliance, hidden AI use damages candidate experience when discovered.

- Buying AI tools without measuring outcomes — AI investments need outcome metrics — time-to-fill changes, candidate experience scores, hire quality, recruiter productivity. Tools deployed without measurement often get retained on inertia rather than evidence.

- Assuming all AI is equivalent — Resume parsing models built for high-volume processing differ significantly from large language models doing outreach drafting, which differ again from interview intelligence platforms doing transcript analysis. Different categories need different evaluation.

Frequently asked questions

What is AI in recruiting?

AI in recruiting is the use of machine learning, large language models, and related technologies to assist or automate parts of the hiring process, including sourcing, screening, scheduling, interview support, and decision support. It's now embedded in most modern TA stacks.

Is AI in recruiting legal?

Yes, but with specific obligations in a growing number of jurisdictions. The EU AI Act classifies most hiring AI as high-risk with transparency, bias monitoring, and human oversight requirements. New York City Local Law 144 requires bias audits of automated employment decision tools. Other US states and countries have passed or are developing similar frameworks. Practitioners need current jurisdiction-specific guidance.

What can AI do better than human recruiters?

AI does better on volume tasks: resume parsing at scale, Boolean string generation, outreach drafting, interview transcription, and scheduling logistics. These are tasks that benefit from speed, consistency, and tireless repetition. AI is materially weaker at judgment calls, such as assessing motivation, reading a candidate's situation, or navigating a difficult conversation, where humans still substantially outperform it.

Should AI make hiring decisions?

Most regulatory frameworks limit AI's role to providing input to human decisions rather than making decisions autonomously. Beyond compliance, AI alone tends to produce worse hire quality and significant bias risk on consequential decisions. The strongest deployments use AI as decision support with humans retaining final judgment.

How do you measure whether AI in recruiting is working?

Through productivity metrics (time-to-fill changes, recruiter capacity gains), quality metrics (hire quality, retention), candidate experience metrics (NPS, dropout patterns), and bias metrics (demographic outcome monitoring). AI investments without outcome measurement often get retained on inertia rather than evidence.
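As one example of a productivity metric, the sketch below compares median time-to-fill before and after a tool was introduced. The function name `time_to_fill_change` and the sample figures are hypothetical. A simple before/after comparison like this does not establish that the tool caused the change, since role mix and market conditions also shift.

```python
from statistics import median


def time_to_fill_change(before_days, after_days):
    """Compare median time-to-fill across two periods (hypothetical helper).

    before_days -- days-to-fill for requisitions closed before the tool
    after_days  -- days-to-fill for requisitions closed after the tool
    Returns both medians and the percentage change relative to the baseline.
    """
    before = median(before_days)
    after = median(after_days)
    return {
        "before": before,
        "after": after,
        "change_pct": (after - before) / before * 100,
    }


# Made-up requisition data, in days.
baseline = [42, 38, 51, 45, 40]
with_tool = [35, 33, 44, 36, 39]

result = time_to_fill_change(baseline, with_tool)
print(result)
```

The median is used rather than the mean so that one unusually slow requisition does not dominate the comparison; the same pattern extends to candidate experience scores or retention figures.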